NEW! – The Most Complete, Comprehensive and Detailed Golf Gear Reviews PERIOD!

Ever since I started the MGS network of sites, my goal has been the same for all of them: to do my best to make something better than what was already out there, or to fill a need that the industry had not already met for the consumer. Which brings us to today's article, which is about product reviews. Typically, before golfers are ready to pull the trigger on a driver or iron set purchase, a large majority of them look to the internet to research their future purchase.

This usually includes a trip to Google and a search for a phrase like “Taylormade R9 Driver Review” or maybe “Titleist Vokey Wedge Review”. You would think either of those would give you the answer you are looking for…right? Actually, it will return hundreds of thousands of results for you to sift through. Or, if you are an avid golf gear head and internet guru, you already know 4 or 5 sites that have some of the better reviews of golf products out there. Which is great…but the majority of the time you have to piece the info from all of them together to get the EXACT information YOU are looking for, which can take hours, sometimes even days.

So we decided that we needed to try to fix this. We wanted to have the ULTIMATE GOLF EQUIPMENT REVIEWS on the web. So what exactly does this mean? It means we feel our new review system will be so thorough that this will be the LAST site you need to search to get the answers that help you make a better informed decision.

So it is now our stated goal here at MGS to provide the most complete, comprehensive, and detailed golf club reviews you will find anywhere. Of course, it’s also important to us that our readers know exactly what we test and how we test it. We’ll always include the full club specifications in all of our reviews, but we also think it’s important that we’re upfront, open and honest about all of our testing methodologies. Any deviation from these, our standard testing protocols, will be disclosed in the review itself. We may be spies, but we don’t believe in keeping secrets about the equipment we review.

What We Test

The evidence suggests that the majority of golfers still buy their equipment “off the rack”. In many cases, even so-called “custom fitting” options involve little more than guessing at what the most appropriate shaft flex might be. With that in mind, when MGS requests equipment for testing, we ask the manufacturer to send us the loft and shaft combination (from the list of available “stock” options) that best fits our pool of primary testers. Because our new SpecCheck system is based on each manufacturer’s stated specifications, we ask for all clubs to be “standard” for length, loft, and lie.

From time to time we might find a compelling reason to request a club with a non-standard configuration; however, we fully expect these instances will be rare, and any change from stock will be fully disclosed. The overwhelming majority of our tests will be conducted on stock equipment, the same stuff you’d buy off the rack at your local sporting goods store.

How We Test

All testing for MyGolfSpy Ultimate Reviews is done using PGA TOUR Simulators, powered by 3Trak, from aboutGolf. Testing takes place at Tark’s Indoor Golf Club, a state-of-the-art golf training, club fitting and repair facility located in Saratoga Springs, NY.

Total Scores and awards are calculated based on feedback and scores in the following areas:

* SpecCheck (25%)

SpecCheck is something brand new at MyGolfSpy and something we felt other internet and magazine golf equipment review sites were missing. While we certainly don’t think it’s our place to tell you (or the manufacturers), what the proper specifications of a golf club should be (although we will point it out when published specifications fall outside of industry norms), we do think that it’s important that the product each and every manufacturer delivers matches their own stated specifications for a given club or set of clubs. The goal of SpecCheck is to find out if the clubs you buy are the clubs you get.

The overall SpecCheck score will be calculated based on the results of the following tests:

- Lie/Loft (irons only) – Each and every club within a set of irons is measured for loft and lie on a STEELCLUB® Plus Angle Machine from Mitchell Golf. For each iron that measures outside of MyGolfSpy’s acceptable tolerances (0.5° for both lie and loft), points are deducted on a sliding scale.

- Length – All clubs we receive will be measured for length. We’ve seen far too many off the rack clubs measure ½” or more shorter (or longer) than the manufacturer’s stated specifications. Our acceptable tolerance for club length is a fairly liberal 1/8″. Points will be deducted for any club measured 1/8″ or more longer or shorter than the stated length.

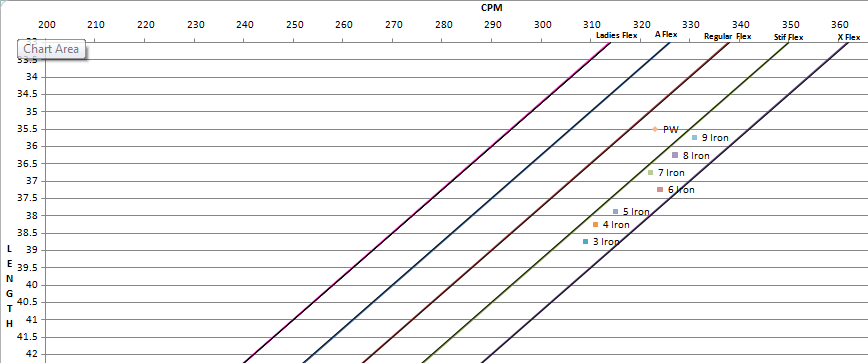

- Frequency (Flex) – While there is no industry standard for shaft flex, there are widely accepted ranges. MyGolfSpy uses a DigiFlex Frequency Meter from Mitchell Golf to measure shaft frequency. For any shaft that falls more than 4 CPM outside of our frequency range for the specified flex (in most cases “stiff”), points will be deducted.

* In Depth Look – For iron sets, we will graph the frequency of each iron. Ideally each iron in a set should be 4 CPM (Cycles Per Minute) from the next.

** Super Detailed Look – Although there is no direct impact on the score, we will also make note of oscillation patterns, especially in those cases where a club (or set) performs particularly well, or particularly poorly.

- Swingweight – Each club we test will be placed on a swingweight scale to measure the actual swingweight as compared to the published specifications. MyGolfSpy’s stated tolerance is within 1 swingweight point (+/-) of the stated spec. For any club whose actual swingweight falls outside our tolerance, points are deducted on a sliding scale.
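As an illustration of the iron-set frequency graphing described in the In Depth Look note above, here is a minimal Python sketch. It only assumes the 4 CPM ideal step stated in the text; the function name, the data layout, and the sample readings are hypothetical and are not part of our actual tooling.

```python
IDEAL_STEP_CPM = 4.0  # from the text: each iron ideally 4 CPM from the next


def frequency_steps(cpm_by_iron):
    """Compare the CPM gap between adjacent irons with the 4 CPM ideal.

    cpm_by_iron: list of (iron_label, measured_cpm) tuples in set order.
    Returns a list of (pair_label, step, deviation_from_ideal) tuples.
    The absolute gap is used, so no direction of progression is assumed.
    """
    steps = []
    for (label_a, cpm_a), (label_b, cpm_b) in zip(cpm_by_iron, cpm_by_iron[1:]):
        step = abs(cpm_b - cpm_a)
        steps.append((f"{label_a}->{label_b}", step, step - IDEAL_STEP_CPM))
    return steps


# Hypothetical readings, for illustration only.
readings = [("4i", 300.0), ("5i", 304.0), ("6i", 309.0), ("7i", 311.0)]
for pair, step, deviation in frequency_steps(readings):
    print(f"{pair}: step {step:.1f} CPM, {deviation:+.1f} vs ideal")
```

A deviation of zero means the two irons are spaced exactly as the ideal describes; larger positive or negative values show where a set's progression is uneven.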

A Word About Tolerances

MyGolfSpy understands that in most cases industry-wide and individual manufacturer tolerances are less strict than our own. With this consideration in mind, for each spec we measure, points are deducted on a sliding scale. As an example, a club that is 1.5° out of spec is penalized more heavily than one that is 1° (the industry standard) out of spec. In this respect, even though our own tolerances are tighter, the standard is applied equally. Indeed, our tolerances are strict, but we believe that for a club to receive a perfect SpecCheck score, it should be perfect.

We think that those manufacturers who deliver their products within stricter tolerances, and exactly as specified, should be rewarded for their higher standards and commitment to quality.

It is our belief that each and every club we test should receive 100% of the points available through SpecCheck. Achieving a perfect score is as simple as giving the consumer exactly what you say you are giving them.
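To make the sliding-scale idea concrete, here is a rough Python sketch. The only figures taken from this article are the 0.5° loft/lie tolerance and the 1° versus 1.5° example; the linear scale, the `points_per_unit` rate, and the `max_deduction` cap are assumptions made purely for illustration, since the actual point values are not published here.

```python
def sliding_deduction(deviation, tolerance, points_per_unit=2.0, max_deduction=5.0):
    """Hypothetical linear sliding scale.

    deviation: absolute difference between measured and stated spec.
    tolerance: acceptable deviation (e.g. 0.5 degrees for loft or lie).
    No points are lost inside the tolerance; beyond it, the deduction
    grows with the excess and is capped at max_deduction.
    """
    excess = abs(deviation) - tolerance
    if excess <= 0:
        return 0.0
    return min(max_deduction, excess * points_per_unit)


LOFT_TOLERANCE_DEG = 0.5  # stated in the article

for out_of_spec in (0.5, 1.0, 1.5):
    lost = sliding_deduction(out_of_spec, LOFT_TOLERANCE_DEG)
    print(f"{out_of_spec}° out of spec -> {lost} points deducted")
```

Under these assumed numbers, a club 1.5° out of spec loses twice as many points as one that is 1° out, and a club inside tolerance loses none, which is the behavior the section describes.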

Sample SpecCheck Chart

SpecCheck Frequency Analysis

SpecCheck accounts for 25% of the total score.

* Subjective Feedback (25%)

Users will be asked to rate the club(s) in each of the following categories:

Looks – Testers will be asked to rate the aesthetics of the clubs on a scale of 1-5 (with 1 being hideously ugly, and 5 representing an exceptionally beautiful club).

Feel – Users are asked to rate the feel of the club on a scale of 1-5 (poor-excellent).

Sound – (woods and hybrids only)

Although sound and feel are closely related, we feel it’s important to separate them as much as possible. When we can, an audio sample of impact will be included in the review.

To ensure that our results are as balanced as possible, subjective testing is conducted using Bridgestone B330-RX golf balls with ball flight tracking disabled (users have no indication of how long, or how straight their shots are flying). By using the Bridgestone B330-RX we’re able to provide sound and feel testing consistent with what the golfer would likely experience on the golf course.

Additionally, testers are not informed of the results of our SpecCheck. We believe by isolating subjective testing from SpecCheck, as well as from our distance and accuracy testing, we can prevent other factors from influencing the outcome of our subjective ratings.

To further separate the subjective from the performance driven, MyGolfSpy uses a two survey method to collect data. Rather than wait until performance testing has been completed, golfers are required to complete an initial subjective analysis survey prior to the start of performance testing.

After performance testing has been completed, users are asked to complete a second survey, which, in addition to eliciting more detailed thoughts and comments for inclusion in our review, asks the golfer to provide an overall value rating based on performance as it relates to cost.

Subjective Testing accounts for 25% of the total score.

* Performance Data (50%)

While we think our subjective testing and SpecCheck are important, it’s performance that matters most when you decide what to put in your bag. For this reason, performance testing accounts for 50% of the overall score.
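For readers who like to see the arithmetic, the 25/25/50 weighting can be sketched in a few lines of Python. The weights come straight from this article; everything else is an assumption for illustration, including the 0-100 scale for each section, the names used, and the way 1-5 survey ratings are mapped onto that scale.

```python
# Weights stated in the article.
WEIGHTS = {"spec_check": 0.25, "subjective": 0.25, "performance": 0.50}


def rating_to_percent(ratings):
    """Map 1-5 survey ratings to 0-100 (assumed mapping: 1 -> 0, 5 -> 100)."""
    average = sum(ratings) / len(ratings)
    return (average - 1) / 4 * 100


def total_score(section_scores):
    """Combine section scores (each assumed to be on a 0-100 scale)."""
    missing = set(WEIGHTS) - set(section_scores)
    if missing:
        raise ValueError(f"missing section scores: {sorted(missing)}")
    return sum(WEIGHTS[name] * section_scores[name] for name in WEIGHTS)


# Hypothetical section scores, for illustration only.
example = {"spec_check": 100, "subjective": 80, "performance": 90}
print(total_score(example))
```

Because performance carries half the weight, a ten point swing in the performance score moves the total twice as far as the same swing in SpecCheck or subjective feedback.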

How We Test Performance

First, we think it’s important to acknowledge that no ball flight analysis system is perfect. Radar and other outdoor testing systems can be influenced by wind, temperature, humidity, and even altitude. Indoor systems are not without their quirks either. While we’d love to provide perfect, irrefutable data, we don’t believe that system exists today. What we can provide is consistency.

MyGolfSpy has partnered with Tark’s Indoor Golf in Saratoga Springs, NY. All performance data collection (as well as SpecCheck) will take place at Tark’s on their state-of-the-art PGA TOUR 3Trak equipped simulators from aboutGolf. We believe aboutGolf provides the most advanced and accurate simulator technology available on the market today, and we’ve talked to hundreds of golfers who share this opinion.

Most importantly, the aboutGolf PGA Simulators at Tark’s Indoor Golf provide us the ability to test year round in a consistent and controlled environment, which is especially beneficial when providing the detailed “apples to apples” comparisons you’ll come to expect from our future reviews.

Simulator Configuration – During our tests, the following simulator options are configured:

- Windspeed: calm

- Fairways: dry

Testing Procedures – Our procedures vary slightly depending on the type of review we’re doing. In each case a small number of golfers representing low, mid, and high handicap golfers is selected from our general pool of testers and asked to participate in more detailed performance testing. When possible we try to find golfers who generate a wide range of ball speeds.

For individual club reviews, testers are asked to hit a series of shots with both their current club(s) and the club we’re reviewing. The results are compared side by side. Scores are based largely on whether the test club(s) matches, underperforms, or outperforms the user’s current club(s).

For head to head competitions, users are asked to hit a series of shots with each of the clubs being tested. Data is collected for side by side comparisons and points are awarded accordingly.

To make the tests as fair and reliable as possible (and to account for the occasional simulator mis-read), a shot will occasionally be removed from the dataset. These shots include:

Obvious mis-reads

- Shots with missing data

- Shots with negative spin rates (indicative of a mis-read)

- Well struck balls where the result exceeds the realistic capabilities of our golfer (for example, a well struck shot 30 yards longer or shorter than any other)

Grossly mis-hit balls

- Anything hit off the crown (sky balls)

- Balls struck with the extreme bottom of the clubface

- Severe slices or hooks that land in the wooded areas of the virtual driving range

Although not included specifically in the ratings, forgiveness at either end of the spectrum will be discussed in the review.

All valid shots will be included on a per-golfer basis to calculate averages for:

- Carry Distance

- Total Distance

- Ball Speed

- Launch Angle

- Spin Rate

- Deviation from the target line
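The shot removal rules and per-golfer averages described above can be sketched roughly as follows. This is an illustration under stated assumptions, not our actual processing: the field names, the `mis_hit` flag (standing in for a tester's judgment call on sky balls and the like), and the reading of the 30-yard rule as "at least 30 yards away from every other shot" are all hypothetical.

```python
from statistics import mean

# Hypothetical field names for the six averaged metrics.
FIELDS = ("carry", "total", "ball_speed", "launch_angle", "spin_rate", "offline")


def is_valid(shot):
    """Apply the simple exclusion rules to one shot (a dict)."""
    if any(shot.get(field) is None for field in FIELDS):
        return False  # missing data
    if shot["spin_rate"] < 0:
        return False  # negative spin indicates a mis-read
    if shot.get("mis_hit"):
        return False  # flagged by hand as a gross mis-hit
    return True


def drop_distance_outliers(shots, gap_yards=30):
    """Drop shots whose carry is gap_yards or more from every other shot."""
    if len(shots) < 3:
        return list(shots)  # too few shots to call anything an outlier
    kept = []
    for i, shot in enumerate(shots):
        others = [o["carry"] for j, o in enumerate(shots) if j != i]
        if min(abs(shot["carry"] - c) for c in others) < gap_yards:
            kept.append(shot)
    return kept


def golfer_averages(shots_by_golfer):
    """Return {golfer: {field: average}} using only valid shots."""
    results = {}
    for golfer, shots in shots_by_golfer.items():
        valid = drop_distance_outliers([s for s in shots if is_valid(s)])
        # A golfer with no valid shots gets an empty result.
        results[golfer] = (
            {field: mean(s[field] for s in valid) for field in FIELDS} if valid else {}
        )
    return results
```

Averages are kept per golfer rather than pooled, which matches the per-golfer basis described above and keeps one tester's swing speed from skewing another's numbers.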

For every review the results will be posted in table form.

Performance Table for MGS SuperComp5 Driver (*example)

Finally, each review will include a summary detailing what we liked and didn’t like, as well as our overall thoughts on the club(s). For individual club reviews a final score will be awarded. For head to head reviews, we’ll include ranking information from our surveys, and Gold, Silver, and Bronze medals will be awarded. Unlike some other publications, advertising dollars will have no impact on our rankings, and we promise that, failing an absolute tie in the overall score, only one gold medal will be awarded.

RT

13 years ago

The rating system you used convinced me to purchase the Cobra ZL driver this year and I love it, keep it up!